It should be clear to everyone that the Google documentation leak and the public documents from antitrust hearings do not really tell us exactly how the rankings work.

The structure of organic search results is now so complex, not least due to the use of machine learning, that even the Google employees who work on the ranking algorithms say they can no longer explain why a result is at position one or two. We do not know the weighting of the many signals or exactly how they interact.

Nevertheless, it is important to familiarize yourself with the structure of the search engine to understand why well-optimized pages don’t rank or, conversely, why seemingly short and non-optimized results sometimes appear at the top of the rankings. The most important aspect is that you need to broaden your view of what is really important.

All the available information clearly shows that. Anyone who is even marginally involved with ranking should incorporate these findings into their own mindset. You will see your websites from a completely different point of view and incorporate additional metrics into your analyses, planning and decisions.

To be honest, it is extremely difficult to draw a truly valid picture of the systems’ structure. The information on the web varies considerably in its interpretation and sometimes uses different terms for the same thing.

An example: The system responsible for building a SERP (search results page) that optimizes the use of space is called Tangram. In some Google documents, however, it is also referred to as Tetris, which is probably a reference to the well-known game.

Over weeks of detailed work, I have viewed, analyzed, structured, discarded and restructured almost 100 documents many times.

This article is not intended to be exhaustive or strictly accurate. It represents my best effort (i.e., “to the best of my knowledge and belief”) and a bit of Inspector Columbo’s investigative spirit. The result is what you see here.

A new document waiting for Googlebot’s visit

When you publish a new website, it is not indexed immediately. Google must first become aware of the URL. This usually happens either via an updated sitemap or via a link to it from an already-known URL.

Frequently visited pages, such as the homepage, naturally bring this link information to Google’s attention more quickly.

The trawler system retrieves new content and keeps track of when to revisit the URL to check for updates. This is managed by a component called the scheduler. The store server decides whether the URL is forwarded or whether it is placed in the sandbox.

Google denies the existence of this box, but the recent leaks suggest that (suspected) spam sites and low-value sites are placed there. It should be mentioned that Google apparently forwards some of the spam, probably for further analysis to train its algorithms.

Our fictitious document passes this barrier. Outgoing links from our document are extracted and sorted into internal and external links. Other systems primarily use this information for link analysis and PageRank calculation. (More on this later.)

Links to images are transferred to the ImageBot, which calls them up, sometimes with a significant delay, and they are placed (together with identical or similar images) in an image container. Trawler apparently uses its own PageRank to adjust the crawl frequency. If a website has more traffic, this crawl frequency increases (ClientTrafficFraction).

Alexandria: The great library

Google’s indexing system, called Alexandria, assigns a unique DocID to each piece of content. If the content is already known, such as in the case of duplicates, a new ID is not created; instead, the URL is linked to the existing DocID.

Important: Google differentiates between a URL and a document. A document can be made up of multiple URLs that contain similar content, including different language versions, if they are properly marked. URLs from other domains are also sorted here. All the signals from these URLs are applied via the common DocID.

For duplicate content, Google selects the canonical version, which appears in search rankings. This also explains why other URLs may sometimes rank similarly; the determination of the “original” (canonical) URL can change over time.

As there is only this one version of our document on the web, it is given its own DocID.
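To make the URL-versus-document distinction tangible, here is a minimal Python sketch. It is purely my own illustration, not Google’s implementation: the `DocIndex` class, the SHA-256 content fingerprint and the whitespace/case normalization are all assumptions chosen to keep the example short.

```python
import hashlib

class DocIndex:
    """Toy model: URLs whose content matches share a single DocID."""

    def __init__(self):
        self._docid_by_fingerprint = {}  # content fingerprint -> DocID
        self.docid_by_url = {}           # URL -> DocID
        self._next_id = 1

    def add(self, url: str, content: str) -> int:
        # Assumption: lowercase and collapse whitespace so trivial
        # differences do not create a new document.
        normalized = " ".join(content.lower().split())
        fingerprint = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        docid = self._docid_by_fingerprint.get(fingerprint)
        if docid is None:
            # Unknown content: hand out a fresh DocID.
            docid = self._next_id
            self._next_id += 1
            self._docid_by_fingerprint[fingerprint] = docid
        # Known content: the URL is simply linked to the existing DocID.
        self.docid_by_url[url] = docid
        return docid

index = DocIndex()
first = index.add("https://example.com/pencils", "All about pencils")
duplicate = index.add("https://example.com/pencils?ref=nav", "all  about   PENCILS")
other = index.add("https://example.com/pens", "All about pens")
```

In this toy model, `first` and `duplicate` end up with the same DocID, so any signal attached to either URL would accrue to one shared document, while `other` gets its own ID.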

Individual segments of our website are searched for relevant keyword phrases and pushed into the search index. There, the “hit list” (all the important words on the page) is first sent to the direct index, which summarizes the keywords that occur multiple times per page.

Now an important step takes place. The individual keyword phrases are integrated into the word catalog of the inverted index (word index). The word pencil and all important documents containing this word are already listed there.

In simple terms, as our document prominently contains the word pencil multiple times, it is now listed in the word index with its DocID under the entry “pencil.”

The DocID is assigned an algorithmically calculated IR (information retrieval) score for pencil, later used for inclusion in the posting list. In our document, for example, the word pencil has been marked in bold in the text and is contained in the H1 (stored in AvrTermWeight). These and other signals increase the IR score.
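The step from hit list to inverted index can be sketched in a few lines of Python. This is a simplified illustration under my own assumptions: the field weights in `WEIGHTS` and the additive `ir_score` formula are invented for the example and say nothing about how Google actually weights terms.

```python
from collections import defaultdict

# Hypothetical weights: a term in the H1 or in bold counts more than body text.
WEIGHTS = {"h1": 3.0, "bold": 2.0, "body": 1.0}

def ir_score(hits):
    """hits: list of field names in which the term occurs in one document."""
    return sum(WEIGHTS[field] for field in hits)

def build_inverted_index(hit_lists):
    """Turn {doc_id: {term: [field, ...]}} into {term: [(doc_id, score), ...]}."""
    index = defaultdict(list)
    for doc_id, terms in hit_lists.items():
        for term, hits in terms.items():
            index[term].append((doc_id, ir_score(hits)))
    # Each posting list is kept sorted by descending score.
    for postings in index.values():
        postings.sort(key=lambda posting: posting[1], reverse=True)
    return dict(index)

hit_lists = {
    101: {"pencil": ["h1", "bold", "body", "body"]},  # our document
    102: {"pencil": ["body"], "eraser": ["h1"]},
    103: {"pencil": ["bold", "body"]},
}
index = build_inverted_index(hit_lists)
pencil_postings = index["pencil"]
```

Looking up `index["pencil"]` returns every DocID that contains the word, best score first, which is exactly the shape of data a later retrieval stage needs.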

Google moves documents considered important to the so-called HiveMind, i.e., the main memory. Google uses both fast SSDs and conventional HDDs (referred to as TeraGoogle) for long-term storage of information that does not require quick access. Documents and signals are stored in the main memory.

Notably, experts estimate that before the recent AI boom, about half of the world’s web servers were housed at Google. A vast network of interconnected clusters allows millions of main memory units to work together. A Google engineer once noted at a conference that, in theory, Google’s main memory could store the entire web.

It’s interesting to note that links, including backlinks, stored in HiveMind seem to carry significantly more weight. For example, links from important documents are given much greater importance, while links from URLs in TeraGoogle (HDD) may be weighted less or possibly not considered at all.

- Hint: Provide your documents with plausible and consistent date values. BylineDate (date in the source code), syntaticDate (extracted date from URL and/or title) and semanticDate (taken from the readable content) are used, among others.

- Faking topicality by changing the date can certainly lead to downranking (demotion). The lastSignificantUpdate attribute records when the last significant change was made to a document. Fixing minor details or typos does not affect this counter.

Additional information and signals for each DocID are stored dynamically in the repository (PerDocData). Many systems access this later when it comes to fine-tuning relevance. It is useful to know that the last 20 versions of a document are stored there (via CrawlerChangerateURLHistory).

Google has the ability to evaluate and assess changes over time. If you want to completely change the content or topic of a document, you would theoretically need to create 20 intermediate versions to override the old content signals. This is why reviving an expired domain (a domain that was previously active but has since been abandoned or sold, perhaps due to insolvency) does not offer any ranking advantage.

If a domain’s Admin-C changes and its thematic content changes at the same time, a machine can easily recognize this at this point. Google then sets all signals to zero, and the supposedly valuable old domain no longer offers any advantages over a completely newly registered domain.

QBST: Someone is looking for ‘pencil’

When someone enters “pencil” as a search term in Google, QBST begins its work. The search phrase is analyzed, and if it contains multiple words, the relevant ones are sent to the word index for retrieval.

The process of term weighting is quite complex, involving systems like RankBrain, DeepRank (formerly BERT) and RankEmbeddedBERT. The relevant terms, such as “pencil,” are then passed on to the Ascorer for further processing.

Ascorer: The ‘green ring’ is created

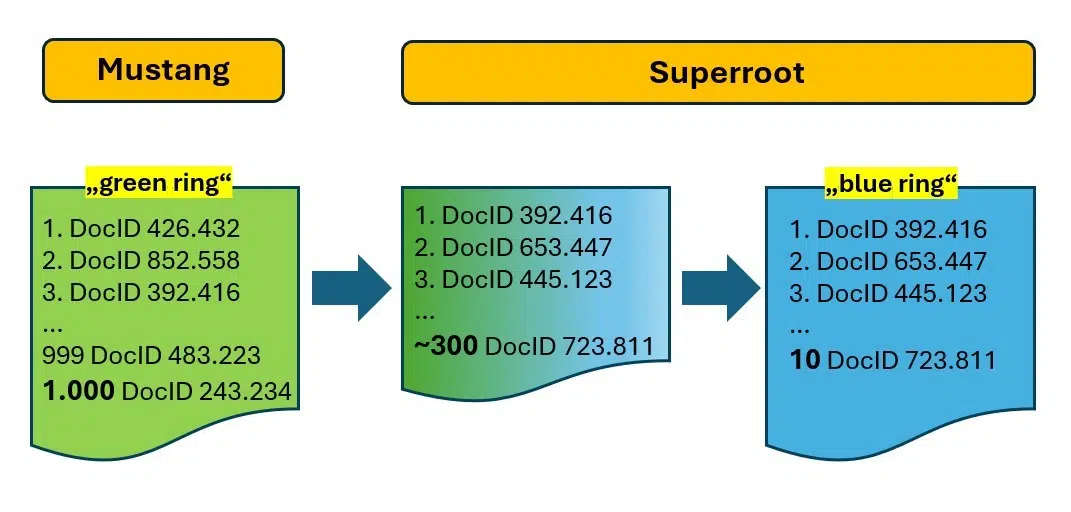

The Ascorer retrieves the top 1,000 DocIDs for “pencil” from the inverted index, ranked by IR score. According to internal documents, this list is referred to as a “green ring.” Within the industry, it is known as a posting list.

The Ascorer is part of a ranking system known as Mustang, where further filtering occurs through methods such as deduplication using SimHash (a type of document fingerprint), passage analysis, systems for recognizing original and helpful content, etc. The goal is to refine the 1,000 candidates down to the “10 blue links” or the “blue ring.”
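For readers who have not met SimHash before, the idea behind such a document fingerprint can be sketched as follows. This is a textbook-style simplification, not what Mustang runs: tokenizing on whitespace, hashing tokens with MD5 and the `max_distance` threshold of 3 are all my assumptions.

```python
import hashlib

def simhash(text: str, bits: int = 64) -> int:
    """Order-independent fingerprint; similar token sets tend to share most bits.

    Assumes bits <= 64 because only 8 bytes of each token hash are used.
    """
    counts = [0] * bits
    for token in text.lower().split():
        digest = hashlib.md5(token.encode("utf-8")).digest()
        token_hash = int.from_bytes(digest[:8], "big")
        # Every token votes +1 or -1 on each bit position.
        for i in range(bits):
            counts[i] += 1 if (token_hash >> i) & 1 else -1
    fingerprint = 0
    for i, count in enumerate(counts):
        if count > 0:
            fingerprint |= 1 << i
    return fingerprint

def hamming_distance(a: int, b: int) -> int:
    """Number of bit positions in which two fingerprints differ."""
    return bin(a ^ b).count("1")

def is_near_duplicate(a: str, b: str, max_distance: int = 3) -> bool:
    return hamming_distance(simhash(a), simhash(b)) <= max_distance

same_words = is_near_duplicate("the yellow pencil", "pencil yellow THE")
```

Because the fingerprint is built from per-token votes, two texts with the same words in a different order get an identical fingerprint, and small edits usually flip only a few bits. Comparing fingerprints is far cheaper than comparing full documents, which is why the technique suits deduplication at this scale.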

Our document about pencils is on the posting list, currently ranked at 132. Without additional systems, this would be its final position.

Superroot: Turn 1,000 into 10!

The Superroot system is responsible for re-ranking, carrying out the precision work of reducing the “green ring” (1,000 DocIDs) to the “blue ring” with only 10 results.

Twiddlers and NavBoost perform this task. Other systems are probably in use here, but their exact details are unclear due to vague information.

- Google Caffeine no longer exists in this form. Only the name has remained.

- Google now works with countless micro-services that communicate with each other and generate attributes for documents. These attributes are used as signals by a wide variety of ranking and re-ranking systems, and the neural networks are trained on them to make predictions.

Filter after filter: The Twiddlers

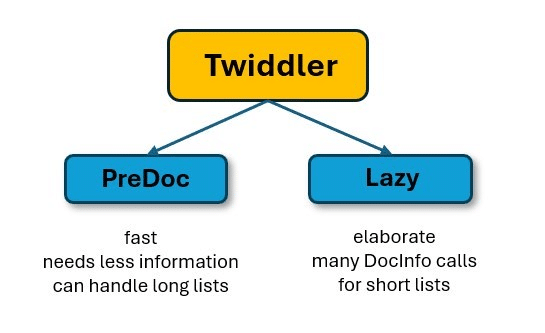

Various documents indicate that several hundred Twiddler systems are in use. Think of a Twiddler as a plug-in similar to those in WordPress.

Each Twiddler has its own specific filter objective. They are designed this way because they are relatively easy to create and don’t require changes to the complex ranking algorithms in Ascorer.

Modifying these algorithms is challenging and would involve extensive planning and programming due to potential side effects. In contrast, Twiddlers operate in parallel or sequentially and are unaware of the activities of other Twiddlers.

There are basically two types of Twiddlers.

- PreDoc Twiddlers can work with the entire set of several hundred DocIDs because they require little or no additional information.

- In contrast, Twiddlers of the “Lazy” type require more information, for example, from the PerDocData database. This takes correspondingly longer and is more complex.

For this reason, the PreDocs first reduce the posting list to significantly fewer entries, and only then do the slower filters start. This saves an enormous amount of computing capacity and time.

Some Twiddlers adjust the IR score, either positively or negatively, while others modify the ranking position directly. Since our document is new to the index, a Twiddler designed to give recent documents a better chance of ranking might, for instance, multiply the IR score by a factor of 1.7. This adjustment could move our document from the 132nd place to the 81st place.
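The plug-in idea and the cheap-first, expensive-later ordering can be illustrated with a small Python sketch. Everything here is hypothetical: the `Result` class, the `PER_DOC_DATA` dictionary standing in for PerDocData, the `is_new` flag and both twiddler functions are my own inventions; only the factor 1.7 comes from the example above.

```python
from dataclasses import dataclass

@dataclass
class Result:
    doc_id: int
    ir_score: float

# Hypothetical stand-in for PerDocData: extra data that is costly to fetch.
PER_DOC_DATA = {
    1: {"is_new": False},
    2: {"is_new": False},
    3: {"is_new": True},
    4: {"is_new": False},
}

def predoc_min_score(results, threshold=1.0):
    """PreDoc-style twiddler: needs only what is already on the result."""
    return [r for r in results if r.ir_score >= threshold]

def lazy_freshness_boost(results, factor=1.7):
    """Lazy-style twiddler: looks up per-document data before adjusting."""
    return [
        Result(r.doc_id, r.ir_score * factor)
        if PER_DOC_DATA[r.doc_id]["is_new"] else r
        for r in results
    ]

def rerank(results, twiddlers):
    """Apply each twiddler in turn; none knows what the others did."""
    for twiddler in twiddlers:
        results = twiddler(results)
    return sorted(results, key=lambda r: r.ir_score, reverse=True)

candidates = [Result(1, 9.0), Result(2, 7.0), Result(3, 5.0), Result(4, 0.5)]
ranked = rerank(candidates, [predoc_min_score, lazy_freshness_boost])
order = [r.doc_id for r in ranked]
```

Here the cheap filter drops document 4 before the costly lookup runs, and the freshness boost lifts the new document 3 past document 2. Each twiddler is an independent function with the same signature, which is what makes adding or removing one so easy.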

Another Twiddler enhances diversity (strideCategory) in the SERPs by devaluing documents with similar content. As a result, several documents ahead of us lose their positions, allowing our pencil document to move up 12 spots to 69. Additionally, a Twiddler that limits the number of blog pages to three for specific queries boosts our ranking to 61.

Our page received a zero (for “Yes”) for the CommercialScore attribute. The Mustang system identified a sales intention during analysis. Google likely knows that searches for “pencil” are frequently followed by refined searches like “buy pencil,” indicating a commercial or transactional intent. A Twiddler designed to account for this search intent adds relevant results and boosts our page by 20 positions, moving us up to 41.

Another Twiddler comes into play, enforcing a “page three penalty” that limits pages suspected of being spam to a maximum rank of 31 (Page 3). The best position for a document is defined by the BadURL-demoteindex attribute, which prevents ranking above this threshold. Attributes like DemoteForContent, DemoteForForwardlinks and DemoteForBacklinks are used for this purpose. As a result, three documents above us are demoted, allowing our page to move up to Position 38.
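A position cap of this kind differs from a score multiplier, so a separate sketch may help. The function below is a guess at the general mechanism only: `enforce_best_position` and its parameters are hypothetical, and the six-result list with a cap at position 4 is deliberately tiny rather than a re-creation of the numbers above.

```python
def enforce_best_position(ranked_ids, demoted, best_allowed):
    """Reorder so that no demoted ID sits above 1-based position best_allowed.

    Assumes there are at least best_allowed - 1 non-demoted results.
    """
    original_rank = {doc_id: i for i, doc_id in enumerate(ranked_ids)}
    clean = [d for d in ranked_ids if d not in demoted]
    flagged = [d for d in ranked_ids if d in demoted]
    # Positions above the cap are filled with non-demoted results only.
    head = clean[: best_allowed - 1]
    # From the cap downward, everything keeps its original relative order.
    tail = sorted(clean[best_allowed - 1:] + flagged, key=original_rank.get)
    return head + tail

ranking = ["a", "b", "c", "d", "e", "f"]
capped = enforce_best_position(ranking, demoted={"b"}, best_allowed=4)
```

In this toy run, “b” drops from second to fourth place, and “c” and “d” each gain one position without their own scores changing at all. That is the effect described above: you can move up simply because someone ahead of you was pushed down.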

Our document could have been devalued, but to keep things simple, we’ll assume it remains unaffected. Let’s consider one last Twiddler that assesses how relevant our pencil page is to our domain based on embeddings. Since our site focuses exclusively on writing instruments, this works to our advantage and negatively impacts 24 other documents.

For instance, consider a price comparison site with a diverse range of topics but with one “good” page about pencils. Because this page’s topic differs significantly from the site’s overall focus, it would be devalued by this Twiddler.

Attributes like siteFocusScore and siteRadius reflect this thematic distance. As a result, our IR score is boosted once more, and other results are downgraded, moving us up to Position 14.
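How a thematic distance might be computed from embeddings can be shown with plain Python. To be clear, this is an assumption-laden sketch: the leak names siteFocusScore and siteRadius but not their formulas, so the centroid, the cosine measure, the `site_radius` definition and the three-dimensional toy vectors are all mine.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm

def centroid(vectors):
    """Component-wise mean of a list of vectors."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def site_radius(page_vectors):
    """Mean cosine distance of pages from the site centroid (smaller = more focused)."""
    center = centroid(page_vectors)
    return sum(1 - cosine(v, center) for v in page_vectors) / len(page_vectors)

# Toy "embeddings": axes loosely mean (stationery, electronics, travel).
pencil_shop = [[0.9, 0.1, 0.0], [0.8, 0.2, 0.0], [1.0, 0.0, 0.1]]
price_comparison = [[0.9, 0.1, 0.0], [0.0, 1.0, 0.1], [0.1, 0.0, 1.0]]
pencil_page = [0.9, 0.1, 0.0]

shop_fit = cosine(pencil_page, centroid(pencil_shop))
comparison_fit = cosine(pencil_page, centroid(price_comparison))
```

The same pencil page sits almost on top of the specialist shop’s centroid but far from the price comparison site’s centroid, and the shop has the much smaller radius. A Twiddler working on values like these would have a simple numeric basis for favoring the focused site.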

As mentioned, Twiddlers serve a wide range of purposes. Developers can experiment with new filters, multipliers or specific position restrictions. It’s even possible to rank a result specifically either in front of or behind another result.

One of Google’s leaked internal documents warns that certain Twiddler features should only be used by experts and after consulting with the core search team.

“If you think you understand how they work, trust us: you don’t. We’re not sure that we do either.”

– Leaked “Twiddler Quick Start Guide – Superroot” document

There are also Twiddlers that only create annotations and add these to the DocID on the way to the SERP. An image then appears in the snippet, for example, or the title and/or description are dynamically rewritten later.

If you wondered during the pandemic why your country’s national health authority (such as the Department of Health and Human Services in the U.S.) consistently ranked first in COVID-19 searches, it was due to a Twiddler that boosts official sources based on language and country using queriesForWhichOfficial.

You have little control over how Twiddlers reorder your results, but understanding their mechanisms can help you better interpret ranking fluctuations or “inexplicable rankings.” It’s valuable to regularly review SERPs and note the types of results.

For example, do you consistently see only a certain number of forum or blog posts, even with different search phrases? How many results are transactional, informational, or navigational? Are the same domains repeatedly appearing, or do they vary with slight changes in the search phrase?

If you notice that only a few online stores are included in the results, it might be less effective to try ranking with a similar site. Instead, consider focusing on more information-oriented content. However, don’t jump to conclusions just yet, as the NavBoost system will be discussed later.

Google’s quality raters and RankLab

Several thousand quality raters work for Google worldwide to evaluate certain search results and test new algorithms and/or filters before they go “live.”

Google explains, “Their ratings don’t directly influence ranking.”

This is essentially correct, but these votes do have a significant indirect impact on rankings.

Here’s how it works: Raters receive URLs or search phrases (search results) from the system and answer predetermined questions, typically assessed on mobile devices.

For example, they might be asked, “Is it clear who wrote this content and when? Does the author have professional expertise on this topic?” The answers to these questions are stored and used to train machine learning algorithms. These algorithms analyze the characteristics of good and trustworthy pages versus less reliable ones.

This approach means that instead of relying on Google search team members to create criteria for ranking, algorithms use deep learning to identify patterns based on the training provided by human evaluators.

Let’s consider a thought experiment to illustrate this. Imagine people intuitively rate a piece of content as trustworthy if it includes an author’s picture, full name, and a LinkedIn biography link. Pages lacking these features are perceived as less trustworthy.

If a neural network is trained on various page features alongside these “Yes” or “No” ratings, it will identify this characteristic as a key factor. After several positive test runs, which typically last at least 30 days, the network might start using this feature as a ranking signal. As a result, pages with an author image, full name, and LinkedIn link might receive a ranking boost, potentially through a Twiddler, while pages without these features could be devalued.

Google’s official stance of not focusing on authors could align with this scenario. However, leaked information reveals attributes like isAuthor and concepts such as “author fingerprinting” through the AuthorVectors attribute, which makes the idiolect (the individual use of terms and formulations) of an author distinguishable or identifiable, again via embeddings.

Raters’ evaluations are compiled into an “information satisfaction” (IS) score. Although many raters contribute, an IS score is only available for a small fraction of URLs. For other pages with similar patterns, this score is extrapolated for ranking purposes.

Google notes, “A lot of documents have no clicks but can be important.” When extrapolation isn’t possible, the system automatically sends the document to raters to generate a score.

The term “golden” is mentioned in relation to quality raters, suggesting there might be a gold standard for certain documents or document types. It can be inferred that aligning with the expectations of human testers could help your document meet this gold standard. Additionally, it’s likely that one or more Twiddlers might provide a significant boost to DocIDs deemed “golden,” potentially pushing them into the top 10.

Quality raters are typically not full-time Google employees and may work through external companies. In contrast, Google’s own experts operate within the RankLab, where they conduct experiments, develop new Twiddlers and evaluate whether these or refined Twiddlers improve result quality or merely filter out spam.

Proven and effective Twiddlers are then integrated into the Mustang system, where complex, computationally intensive and interconnected algorithms are used.

But what do users want? NavBoost can fix that!

Our pencil document hasn’t fully succeeded yet. Within Superroot, another core system, NavBoost, plays a significant role in determining the order of search results. NavBoost uses “slices” to manage different data sets for mobile, desktop, and local searches.

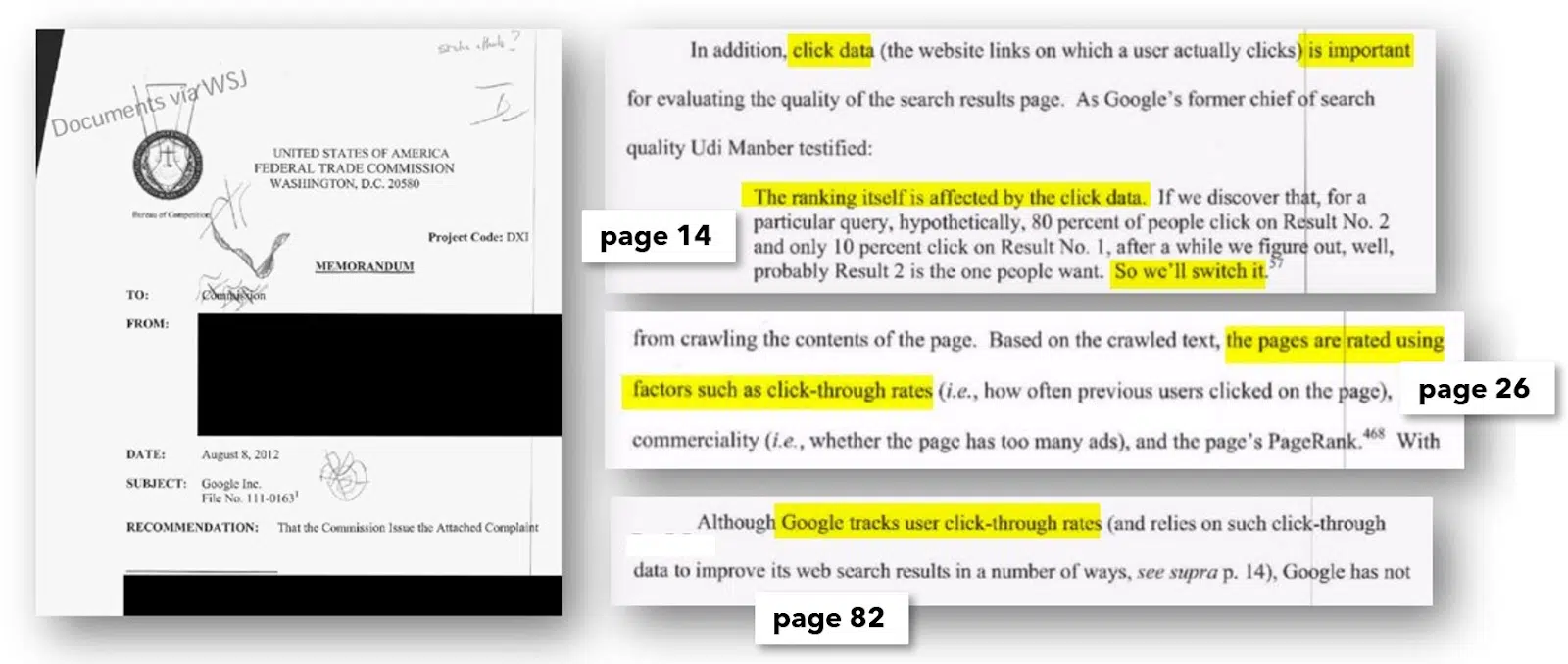

Although Google has officially denied using user clicks for ranking purposes, FTC documents reveal an internal email instructing that the handling of click data must remain confidential.

This shouldn’t be held against Google, as there are two understandable reasons for denying the use of click data. Firstly, acknowledging the use of click data could provoke media outrage over privacy concerns, portraying Google as a “data octopus” tracking our online activity. However, the intent behind using click data is to obtain statistically relevant metrics, not to monitor individual users. While data protection advocates might view this differently, this perspective helps explain the denial.

FTC documents confirm that click data is used for ranking purposes and frequently mention the NavBoost system in this context (54 times in the April 18, 2023 hearing). An official hearing in 2012 also revealed that click data influences rankings.

It has been established that both click behavior on search results and traffic on websites or webpages impact rankings. Google can easily evaluate search behavior, including searches, clicks, repeat searches and repeat clicks, directly within the SERPs.

There has been speculation that Google could infer domain movement data from Google Analytics, leading some to avoid using this system. However, this theory has limitations.

First, Google Analytics does not provide access to all transaction data for a domain. More importantly, with over 60% of people using the Google Chrome browser (over three billion users), Google collects data on a substantial portion of web activity.

This makes Chrome a crucial component in analyzing web movements, as highlighted in hearings. Additionally, Core Web Vitals signals are officially collected through Chrome and aggregated into the “chromeInTotal” value.

The negative publicity associated with “monitoring” is one reason for the denial. The second is the concern that evaluating click and movement data could encourage spammers and tricksters to fabricate traffic using bot systems to manipulate rankings. While the denial might be frustrating, the underlying reasons are at least understandable.

- Some of the metrics that are stored include badClicks and goodClicks. The length of time a searcher stays on the target page and the information on how many other pages they view there and at what time (Chrome data) are most likely included in this evaluation.

- A short detour to a search result and a quick return to the search results and further clicks on other results can increase the number of bad clicks. The search result that had the last “good” click in a search session is recorded as the lastLongestClick.

- The data is squashed (i.e., condensed), so that it is statistically normalized and less susceptible to manipulation.

- If a page, a cluster of pages or the start page of a domain generally has good visitor metrics (Chrome data), this has a positive effect via NavBoost. By analyzing movement patterns within a domain or across domains, it is even possible to determine how good the user guidance is via the navigation.

- Since Google measures entire search sessions, it is theoretically even possible in extreme cases to recognize that a completely different document is considered suitable for a search query. If a searcher leaves the domain that they clicked on in the search result within a search and goes to another domain (because it may even have been linked from there) and stays there as the recognizable end of the search, this “end” document could be flushed to the front via NavBoost in the future, provided it is available in the selection ring set. However, this would require a strong statistically relevant signal from many searchers.
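The click metrics in the list above can be mimicked with a short Python sketch. Treat every detail as an assumption: the 30-second `good_threshold`, the rule in `classify_click` and the logarithmic `squash` function are placeholders I chose, since the leaked material names the metrics but not how they are calculated.

```python
import math

def classify_click(dwell_seconds, returned_to_serp, good_threshold=30):
    """Hypothetical rule: a quick bounce back to the results is a bad click."""
    if returned_to_serp and dwell_seconds < good_threshold:
        return "bad"
    return "good"

def squash(count):
    """Compress raw counts so that very large values add little extra weight."""
    return math.log1p(count)

# One search session, in click order.
session = [
    {"doc": "A", "dwell": 5, "returned": True},
    {"doc": "B", "dwell": 12, "returned": True},
    {"doc": "C", "dwell": 240, "returned": False},
]
labels = {c["doc"]: classify_click(c["dwell"], c["returned"]) for c in session}

# The last good click of the session, in the spirit of lastLongestClick.
good_clicks = [c["doc"] for c in session if labels[c["doc"]] == "good"]
last_longest_click = good_clicks[-1]
```

Squashing matters for manipulation resistance: in this sketch, a hundred times more clicks only roughly doubles the squashed value (`squash(10_000)` versus `squash(100)`), so a bot farm buys far less influence than its raw volume suggests.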

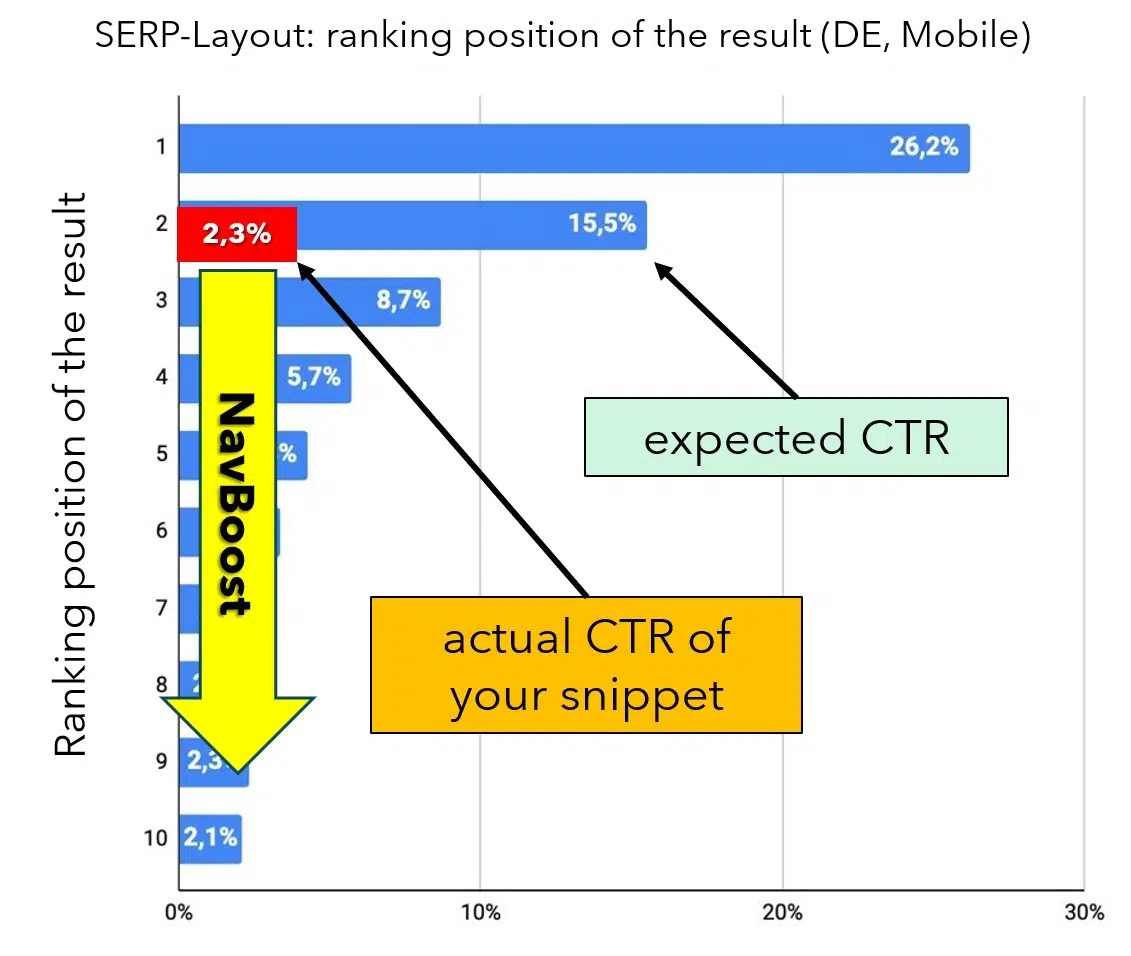

Let’s first examine clicks in search results. Each ranking position in the SERPs has an average expected click-through rate (CTR), serving as a performance benchmark. For example, according to an analysis by Johannes Beus presented at this year’s CAMPIXX in Berlin, the organic Position 1 receives an average of 26.2% of clicks, while Position 2 gets 15.5%.

If a snippet’s actual CTR falls significantly short of the expected rate, the NavBoost system registers this discrepancy and adjusts the ranking of the DocIDs accordingly. If a result historically generates significantly more or fewer clicks than expected, NavBoost will move the document up or down in the rankings as needed (see Figure 6).

This approach makes sense because clicks essentially represent a vote from users on the relevance of a result based on the title, description and domain. This concept is even detailed in official documents, as illustrated in Figure 7.
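The comparison of actual and expected CTR is easy to express in code. In the sketch below, only the values for positions 1 and 2 come from the analysis cited above; the remaining entries in `EXPECTED_CTR`, the 25% `tolerance` and the function names are placeholders of mine, and nothing is known about the thresholds NavBoost really applies.

```python
# Expected CTR per organic position. Positions 1 and 2 are from the analysis
# cited in the text; positions 3 to 5 are illustrative placeholders.
EXPECTED_CTR = {1: 0.262, 2: 0.155, 3: 0.10, 4: 0.07, 5: 0.05}

def ctr_ratio(position, impressions, clicks):
    """Actual CTR divided by the expected CTR for that position."""
    actual = clicks / impressions
    return actual / EXPECTED_CTR[position]

def suggested_move(position, impressions, clicks, tolerance=0.25):
    """Return 'up', 'down' or 'hold' depending on how far the CTR deviates."""
    ratio = ctr_ratio(position, impressions, clicks)
    if ratio > 1 + tolerance:
        return "up"
    if ratio < 1 - tolerance:
        return "down"
    return "hold"

underperformer = suggested_move(position=1, impressions=1000, clicks=100)
overperformer = suggested_move(position=2, impressions=1000, clicks=250)
on_target = suggested_move(position=1, impressions=1000, clicks=262)
```

A result at Position 1 with a 10% CTR earns well under half of what is expected there and would be a candidate for demotion, while a Position 2 result with 25% clearly beats its benchmark. You can run the same comparison on your own Search Console data to spot snippets that need work.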

Since our pencil document is still new, there are no available CTR values yet. It’s unclear whether CTR deviations are ignored for documents with no data, but this seems likely, as the goal is to incorporate user feedback. Alternatively, the CTR may initially be estimated based on other values, similar to how the quality factor is handled in Google Ads.

- SEO experts and data analysts have long reported that they have noticed the following phenomenon when comprehensively monitoring their own click-through rates: If a document for a search query newly appears in the top 10 and the CTR falls significantly short of expectations, you can observe a drop in ranking within a few days (depending on the search volume).

- Conversely, the ranking often rises if the CTR is significantly higher in relation to the rank. You only have a short time to react and adjust the snippet if the CTR is poor (usually by optimizing the title and description) so that more clicks are collected. Otherwise, the position deteriorates and is subsequently not so easy to regain. Tests are thought to be behind this phenomenon. If a document proves itself, it can stay. If searchers don’t like it, it disappears again. Whether this is actually related to NavBoost is neither clear nor conclusively provable.

Based on the leaked information, it appears that Google uses extensive data from a page’s “environment” to estimate signals for new, unknown pages.

For instance, NearestSeedversion suggests that the PageRank of the home page (HomePageRank_NS) is transferred to new pages until they develop their own PageRank. Additionally, pnavClicks seems to be used to estimate and assign the probability of clicks through navigation.

Calculating and updating PageRank is time-consuming and computationally intensive, which is why the PageRank_NS metric is likely used instead. “NS” stands for “nearest seed,” meaning that a set of related pages shares a PageRank value, which is temporarily or permanently applied to new pages.
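The nearest-seed idea boils down to a fallback lookup, sketched here in Python. The function `effective_pagerank`, the three dictionaries and the numeric values are hypothetical and serve only to show the principle of borrowing a value until a page has its own.

```python
def effective_pagerank(url, own_pagerank, nearest_seed, seed_pagerank):
    """Use a page's own PageRank if it has one, else its nearest seed's value."""
    if url in own_pagerank:
        return own_pagerank[url]
    return seed_pagerank[nearest_seed[url]]

# Hypothetical data: the older page has its own value, the new one does not.
own_pagerank = {"https://example.com/pens": 3.2}
nearest_seed = {
    "https://example.com/pens": "https://example.com/",
    "https://example.com/pencils": "https://example.com/",
}
seed_pagerank = {"https://example.com/": 5.0}

new_page = effective_pagerank(
    "https://example.com/pencils", own_pagerank, nearest_seed, seed_pagerank
)
old_page = effective_pagerank(
    "https://example.com/pens", own_pagerank, nearest_seed, seed_pagerank
)
```

The new pencil page inherits the seed’s value immediately instead of starting from nothing, which would explain how fresh pages on strong sites can rank before they have earned a single backlink.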

It is probable that values from neighboring pages are also used for other critical signals, helping new pages climb the rankings despite lacking significant traffic or backlinks. Many signals are not attributed in real time but may involve a notable delay.

- Google itself gave a good example of freshness during a hearing. For example, if you search for “Stanley Cup,” the search results typically feature the well-known mug. However, when the Stanley Cup ice hockey games are actively taking place, NavBoost adjusts the results to prioritize information about the games, reflecting changes in search and click behavior.

- Freshness does not refer to new (i.e., “fresh”) documents but to changes in search behavior. According to Google, there are over a billion (that’s not a typo) new behaviors in the SERPs every day! So every search and every click contributes to Google’s learning. The assumption that Google knows everything about seasonality is probably not correct. Google recognizes fine-grained changes in search intentions and constantly adapts the system, which creates the illusion that Google actually “understands” what searchers want.

The click metrics for documents are apparently stored and evaluated over a period of 13 months (one month overlap in the year for comparisons with the previous year), according to the latest findings.

Since our hypothetical domain has strong visitor metrics and substantial direct traffic from advertising, as a well-known brand (which is a positive signal), our new pencil document benefits from the favorable signals of older, successful pages.

As a result, NavBoost elevates our ranking from 14th to 5th place, placing us in the “blue ring” or top 10. This top 10 list, including our document, is then forwarded to the Google Web Server along with the other nine organic results.

- Contrary to expectations, Google does not actually deliver many personalized search results. Tests have probably shown that modeling user behavior and making changes to it delivers better results than evaluating the personal preferences of individual users.

- This is remarkable. The prediction via neural networks is now better suited to us than our own surfing and clicking history. However, individual preferences, such as a preference for video content, are still included in the personal results.

The GWS: The place every thing involves an finish and a brand new starting

The Google Internet Server (GWS) is accountable for assembling and delivering the search outcomes web page (SERP). This consists of 10 blue hyperlinks, together with adverts, photographs, Google Maps views, “People also ask” sections and different parts.

The Tangram system handles geometric house optimization, calculating how a lot house every factor requires and what number of outcomes match into the accessible “boxes.” The Glue system then arranges these parts of their correct locations.

Our pencil doc, at the moment in fifth place, is a part of the natural outcomes. Nonetheless, the CookBook system can intervene on the final second. This technique consists of FreshnessNode, InstantGlue (reacts in durations of 24 hours with a delay of round 10 minutes) and InstantNavBoost. These parts generate further indicators associated to topicality and may modify rankings within the closing moments earlier than the web page is displayed.

Let’s say a German TV program about 250 years of Faber-Castell and the myths surrounding the phrase “pencil” begins to air. Inside minutes, hundreds of viewers seize their smartphones or tablets to look on-line. This can be a typical state of affairs. FreshnessNode detects the surge in searches for “pencil” and, noting that customers are searching for info slightly than making purchases, adjusts the rankings accordingly.

On this distinctive state of affairs, InstantNavBoost removes all transactional outcomes and replaces them with informational ones in actual time. InstantGlue then updates the “blue ring,” inflicting our beforehand sales-oriented doc to drop out of the highest rankings and get replaced by extra related outcomes.

Unfortunate as it may be, this hypothetical end to our ranking journey illustrates an important point: achieving a high ranking isn’t solely about having a great document or implementing the right SEO measures with high-quality content.

Rankings can be influenced by a variety of factors, including changes in search behavior, new signals for other documents and evolving circumstances. Therefore, it’s important to recognize that having an excellent document and doing a good job with SEO is just one part of a broader and more dynamic ranking landscape.

The process of compiling search results is extremely complex, influenced by thousands of signals. With numerous tests conducted live by SearchLab using Twiddler, even backlinks to your documents can be affected.

The linking documents might be moved from HiveMind to less critical levels, such as SSDs or even TeraGoogle, which can weaken or eliminate their influence on rankings. This can shift ranking scales even if nothing has changed with your own document.

Google’s John Mueller has emphasized that a drop in ranking often doesn’t mean you’ve done anything wrong. Changes in user behavior or other factors can alter how results perform.

For instance, if searchers start preferring more detailed information and shorter texts over time, NavBoost will automatically adjust rankings accordingly. However, the IR score in the Alexandria system or Ascorer remains unchanged.

One key takeaway is that SEO must be understood in a broader context. Optimizing titles or content won’t be effective if a document and its search intent don’t align.

The impact of Twiddlers and NavBoost on rankings can often outweigh traditional on-page, on-site or off-site optimizations. If these systems limit a document’s visibility, additional on-page improvements will have minimal effect.

However, our journey doesn’t end on a low note. The impact of the TV program about pencils is temporary. Once the search surge subsides, FreshnessNode will no longer affect our ranking, and we’ll settle back at fifth place.

As we restart the cycle of collecting click data, a CTR of around 4% is expected for Position 5 (according to Johannes Beus from SISTRIX). If we can maintain this CTR, we can expect to stay in the top ten. All will be well.
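As a quick illustration of that benchmark, the following sketch compares a page's measured CTR with the roughly 4% cited above for Position 5. The function names and the sample click and impression counts are assumptions. In practice the figures would come from a source such as Google Search Console.

```python
EXPECTED_CTR_POSITION_5 = 0.04  # roughly 4%, the figure cited in the text


def click_through_rate(clicks, impressions):
    """CTR is clicks divided by impressions; 0.0 if there were no impressions."""
    if impressions == 0:
        return 0.0
    return clicks / impressions


def meets_expected_ctr(clicks, impressions, expected=EXPECTED_CTR_POSITION_5):
    """Return True if the measured CTR reaches the expected benchmark."""
    return click_through_rate(clicks, impressions) >= expected


# Hypothetical numbers for our pencil document at Position 5.
print(meets_expected_ctr(clicks=45, impressions=1000))  # 4.5% is above the benchmark
print(meets_expected_ctr(clicks=30, impressions=1000))  # 3.0% is below the benchmark
```

The benchmark is an average across many queries, so a single page falling slightly below it is a prompt to investigate rather than proof of a problem.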

Key SEO takeaways

- Diversify traffic sources: Make sure you receive traffic from various sources, not just search engines. Traffic from less obvious channels, like social media platforms, is also valuable. Even if Google’s crawler can’t access certain pages, Google can still track how many visitors come to your website through platforms like Chrome or direct URLs.

- Build brand and domain awareness: Always work on strengthening your brand or domain name recognition. The more familiar people are with your name, the more likely they are to click on your site in search results. Ranking for many long-tail keywords can also boost your domain’s visibility. Leaks suggest that “site authority” is a ranking signal, so building your brand’s reputation can help improve your search rankings.

- Understand search intent: To better meet your visitors’ needs, try to understand their search intent and journey. Use tools like Semrush or SimilarWeb to see where your visitors come from and where they go after visiting your site. Analyze these domains: do they offer information that your landing pages lack? Gradually add this missing content to become the “final destination” in your visitors’ search journey. Remember, Google tracks related search sessions and knows precisely what searchers are looking for and where they have been searching.

- Optimize your titles and descriptions to improve CTR: Start by reviewing your current CTR and making adjustments to enhance click appeal. Capitalizing a few important words can help them stand out visually, potentially boosting CTR; test this approach to see if it works for you. The title plays a critical role in determining whether your page ranks well for a search term, so optimizing it should be a top priority.

- Evaluate hidden content: If you use accordions to “hide” important content that requires a click to reveal, check if these pages have a higher-than-average bounce rate. When searchers can’t immediately see they’re in the right place and need to click multiple times, the likelihood of negative click signals increases.

- Remove underperforming pages: Pages that nobody visits (according to web analytics) or that don’t achieve a good ranking over longer periods of time should be removed if necessary. Bad signals are also passed on to neighboring pages! If you publish a new document in a “bad” page cluster, the new page has little chance. “deltaPageQuality” apparently measures the qualitative difference between individual documents in a domain or cluster.

- Improve page structure: A clear page structure, easy navigation and a strong first impression are essential for achieving top rankings, often thanks to NavBoost.

- Maximize engagement: The longer visitors stay on your site, the better the signals your domain sends, which benefits all of your subpages. Aim to be the final destination by providing all the information they need so visitors won’t have to search elsewhere.

- Expand existing content rather than constantly creating new content: Updating and enhancing your existing content can be more effective. ContentEffortScore measures the effort put into creating a document, with factors like high-quality images, videos, tools and unique content all contributing to this important signal.

- Align your headings with the content they introduce: Ensure that (intermediate) headings accurately reflect the text blocks that follow. Thematic analysis using techniques like embeddings (text vectorization) is more effective than purely lexical methods at identifying whether headings and content match.

- Make use of web analytics: Tools like Google Analytics let you track visitor engagement effectively and identify and address any gaps. Pay particular attention to the bounce rate of your landing pages. If it’s too high, investigate potential causes and take corrective actions. Remember, Google can access this data through the Chrome browser.

- Target less competitive keywords: You can also focus on ranking well for less competitive keywords first and thus more easily build up positive user signals.

- Cultivate quality backlinks: Focus on links from recent or high-traffic pages stored in HiveMind, as these provide more valuable signals. Links from pages with little traffic or engagement are less effective. Additionally, backlinks from pages within the same country and those with thematic relevance to your content are more beneficial. Be aware that “toxic” backlinks, which negatively affect your ranking, do exist and should be avoided.

- Pay attention to the context surrounding links: The text before and after a link, not just the anchor text itself, is considered for ranking. Make sure that the text flows naturally around the link. Avoid using generic phrases like “click here,” which have been ineffective for over twenty years.

- Be aware of the Disavow tool’s limitations: The Disavow tool, used to invalidate bad links, is not mentioned in the leak at all. It seems that the algorithms don’t consider it, and it serves primarily a documentary purpose for spam fighters.

- Consider author expertise: If you use author references, ensure they are also recognized on other websites and demonstrate relevant expertise. Having fewer but highly qualified authors is better than having many less credible ones. According to a patent, Google can assess content based on the author’s expertise, distinguishing between experts and laypeople.

- Create original, helpful, comprehensive and well-structured content: This is especially important for key pages. Demonstrate your genuine expertise on the topic and, if possible, provide evidence of it. While it’s easy to have someone write content just to have something on the page, setting high ranking expectations without real quality and expertise is not realistic.
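The bounce-rate checks suggested in the takeaways above (for accordion pages and for landing pages in general) can be sketched as follows. This assumes the classic definition of bounce rate, single-page sessions divided by all sessions. The URLs, session counts and the 20% tolerance are made up for illustration, and your analytics tool may define the metric differently.

```python
from statistics import mean


def bounce_rate(single_page_sessions, total_sessions):
    """Share of sessions that viewed only one page (classic definition)."""
    if total_sessions == 0:
        return 0.0
    return single_page_sessions / total_sessions


def flag_high_bounce_pages(pages, tolerance=1.2):
    """Return URLs whose bounce rate exceeds the site average by the tolerance.

    `pages` maps a URL to a (single_page_sessions, total_sessions) tuple.
    """
    rates = {url: bounce_rate(*counts) for url, counts in pages.items()}
    average = mean(rates.values())
    return sorted(url for url, rate in rates.items() if rate > average * tolerance)


# Hypothetical export from a web analytics tool.
landing_pages = {
    "/pencils": (300, 1000),
    "/faq-accordion": (700, 1000),
    "/about": (350, 1000),
}

print(flag_high_bounce_pages(landing_pages))
```

Flagged pages are candidates for a closer look, for example checking whether key content is hidden behind clicks, rather than automatic candidates for removal.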

A version of this article was originally published in German in August 2024 in Website Boosting, Issue 87.

Contributing authors are invited to create content for Search Engine Land and are chosen for their expertise and contribution to the search community. Our contributors work under the oversight of the editorial staff and contributions are checked for quality and relevance to our readers. The opinions they express are their own.